Just months after Google DeepMind unveiled Gemini, its most capable AI model ever, the London-based lab has released its compact offspring: Gemma.

Named after the Latin word for “precious stone,” Gemma is a new family of open models for developers and researchers.

“Demonstrating strong performance across benchmarks for language understanding and reasoning, Gemma is available worldwide starting today,” Sundar Pichai, the CEO of Google, said on Twitter.

Gemma comes in two sizes: 2 billion and 7 billion parameters. Each size has been released in pre-trained and instruction-tuned variants.

The lightweight models are descendants of Gemini. As a result, Gemma has inherited technical and infrastructure components from its parent, which enables “best-in-class performance,” Google said.

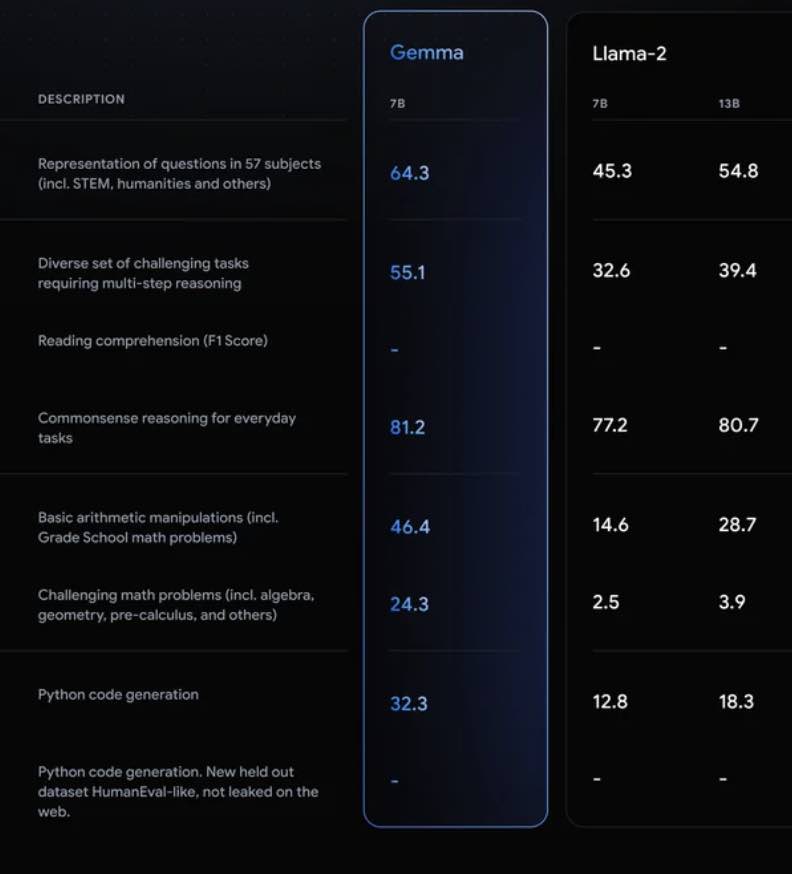

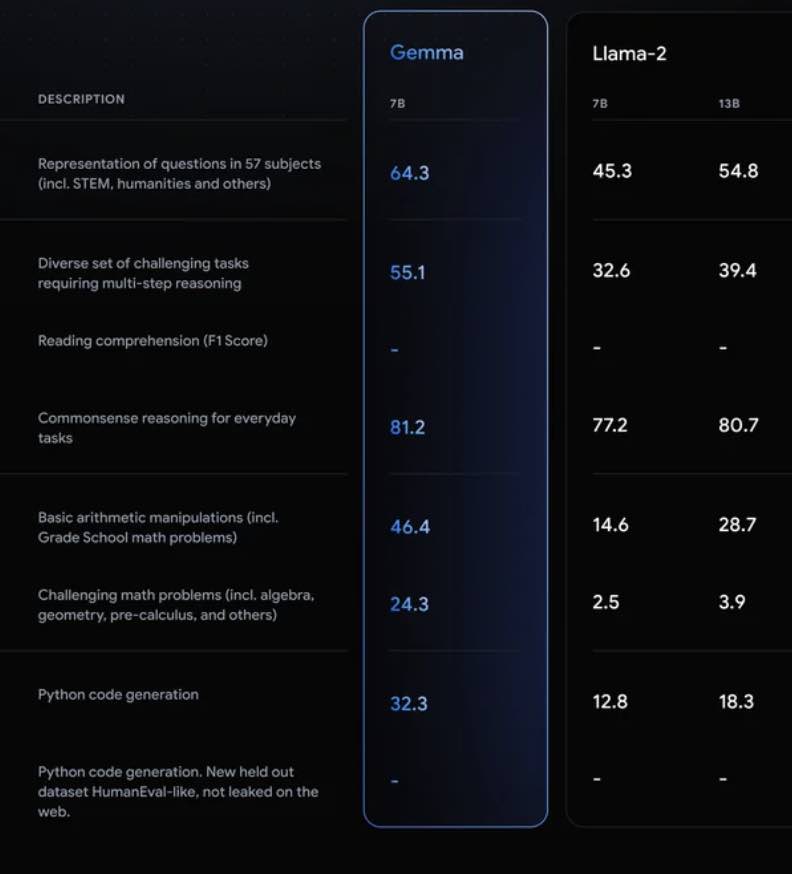

As evidence, the tech titan published some eye-catching benchmark comparisons with Llama 2, a family of large language models (LLMs) that Meta released last year.

Gemma is built to run directly on a developer laptop or desktop computer. Alongside the model weights, Google has also released a new Responsible Generative AI Toolkit to support safe use of the system.

Devs and researchers can start experimenting with Gemma now. It’s available for “responsible commercial usage and distribution” by any organisation, Google said.

To try the models out for yourself, visit the Gemma website.